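This post continues the previous two posts and updates the environment they set up.

Installation based on MNIST handwritten-digit recognition, and the pitfalls encountered

Main motivation:

The environment used to run the programs in the two previous posts did not actually use the GPU. This post describes how to make use of the GPU.

First, use pip to reinstall TensorFlow, this time the GPU-enabled build (`tensorflow-gpu`), in the same way as before.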

    [root@bogon tensorflow]# pip install --upgrade tensorflow-gpu
    Collecting tensorflow-gpu
    Downloading tensorflow_gpu-1.0.1-cp27-cp27mu-manylinux1_x86_64.whl (94.8MB)
    100% |████████████████████████████████| 94.8MB 9.6kB/s
    Requirement already up-to-date: protobuf>=3.1.0 in /usr/lib64/python2.7/site-packages (from tensorflow-gpu)
    Requirement already up-to-date: six>=1.10.0 in /usr/lib/python2.7/site-packages (from tensorflow-gpu)
    Requirement already up-to-date: wheel in /usr/lib/python2.7/site-packages (from tensorflow-gpu)
    Requirement already up-to-date: mock>=2.0.0 in /usr/lib/python2.7/site-packages (from tensorflow-gpu)
    Requirement already up-to-date: numpy>=1.11.0 in /usr/lib64/python2.7/site-packages (from tensorflow-gpu)
    Requirement already up-to-date: setuptools in /usr/lib/python2.7/site-packages (from protobuf>=3.1.0->tensorflow-gpu)
    Requirement already up-to-date: funcsigs>=1; python_version < "3.3" in /usr/lib/python2.7/site-packages (from mock>=2.0.0->tensorflow-gpu)
    Requirement already up-to-date: pbr>=0.11 in /usr/lib/python2.7/site-packages (from mock>=2.0.0->tensorflow-gpu)
    Requirement already up-to-date: appdirs>=1.4.0 in /usr/lib/python2.7/site-packages (from setuptools->protobuf>=3.1.0->tensorflow-gpu)
    Requirement already up-to-date: packaging>=16.8 in /usr/lib/python2.7/site-packages (from setuptools->protobuf>=3.1.0->tensorflow-gpu)
    Requirement already up-to-date: pyparsing in /usr/lib/python2.7/site-packages (from packaging>=16.8->setuptools->protobuf>=3.1.0->tensorflow-gpu)
    Installing collected packages: tensorflow-gpu
    Successfully installed tensorflow-gpu-1.0.1

The installation completed successfully.

![Image 1: TensorFlow installation environment update](https://images.43s.cn/wp-content/uploads//2022/06/1655194891711_0.jpg)

Next, run the MNIST handwritten-digit recognition program to check whether the GPU is actually being used.

    [root@bogon tensorflow]# python mnist_demo1.py
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcublas.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:126] Couldn't open CUDA library libcudnn.so.5. LD_LIBRARY_PATH: /usr/local/cuda-8.0/lib64:
    I tensorflow/stream_executor/cuda/cuda_dnn.cc:3517] Unable to load cuDNN DSO
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcufft.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcuda.so.1 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcurand.so.8.0 locally
    Extracting MNIST_data/train-images-idx3-ubyte.gz
    Extracting MNIST_data/train-labels-idx1-ubyte.gz
    Extracting MNIST_data/t10k-images-idx3-ubyte.gz
    Extracting MNIST_data/t10k-labels-idx1-ubyte.gz
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE3 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:885] Found device 0 with properties:
    name: GeForce GTX 1080
    major: 6 minor: 1 memoryClockRate (GHz) 1.7335
    pciBusID 0000:82:00.0
    Total memory: 7.92GiB
    Free memory: 7.81GiB
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:906] DMA: 0
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:916] 0: Y
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:975] Creating TensorFlow device (/gpu:0) -> (device: 0, name: GeForce GTX 1080, pci bus id: 0000:82:00.0)
    F tensorflow/stream_executor/cuda/cuda_dnn.cc:222] Check failed: s.ok() could not find cudnnCreate in cudnn DSO; dlerror: /usr/lib/python2.7/site-packages/tensorflow/python/_pywrap_tensorflow.so: undefined symbol: cudnnCreate
    Aborted (core dumped)

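The run aborts with an error (the lines highlighted in red in the original output): the cuDNN shared library `libcudnn.so.5` cannot be opened, so `cudnnCreate` is never found. Check the CUDA installation path to see whether that file exists.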
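With this much output, the one failing library is easy to miss. The following is a minimal sketch that scans a captured log for the `Couldn't open CUDA library` message. It assumes the message format shown in the output above (TensorFlow 1.0.1); the function name `missing_cuda_libraries` is made up for illustration, and other TensorFlow versions may word the message differently.

```python
import re

# Matches the dso_loader message as it appears in the log above, e.g.
# "Couldn't open CUDA library libcudnn.so.5. LD_LIBRARY_PATH: /usr/local/cuda-8.0/lib64:"
MISSING_LIB = re.compile(r"Couldn't open CUDA library (\S+)\. LD_LIBRARY_PATH: (\S*)")


def missing_cuda_libraries(log_text):
    """Return (library, search_path) pairs for every failed library lookup in the log."""
    return MISSING_LIB.findall(log_text)


# Two lines copied from the run above, used as sample input.
sample = (
    "I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcublas.so.8.0 locally\n"
    "I tensorflow/stream_executor/dso_loader.cc:126] Couldn't open CUDA library libcudnn.so.5. "
    "LD_LIBRARY_PATH: /usr/local/cuda-8.0/lib64:\n"
)

for name, path in missing_cuda_libraries(sample):
    print("missing:", name, "| searched:", path)
```

In practice the log would first be saved to a file and then read into `log_text`.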

    [root@bogon lib64]# ll libcudnn
    libcudnn.so.5.1    libcudnn.so.5.1.5  libcudnn_static.a

Indeed, there is no `libcudnn.so.5` file.
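The same check can be made from Python without starting TensorFlow. This is a small sketch using the standard `ctypes` module, assuming a Linux system where a bare library name is resolved by the dynamic loader (which consults `LD_LIBRARY_PATH` among other locations). The helper name `can_load` is hypothetical, and the result only says whether the loader can open the file, not whether TensorFlow will work with it.

```python
import ctypes


def can_load(library_name):
    """Return True if the dynamic loader can open the named shared library."""
    try:
        ctypes.CDLL(library_name)
    except OSError:
        # Raised when the file is missing or cannot be loaded.
        return False
    return True


# The name TensorFlow asked for, and the name that exists in lib64.
for name in ("libcudnn.so.5", "libcudnn.so.5.1"):
    print(name, "loadable" if can_load(name) else "NOT loadable")
```

On the machine described here, one would expect the first name to fail and the second to succeed, provided `LD_LIBRARY_PATH` includes the CUDA `lib64` directory.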

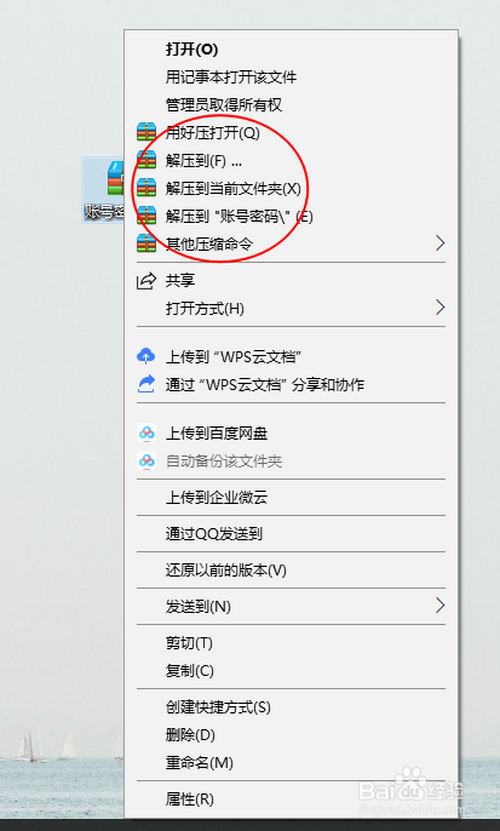

Next, create a symbolic link so that `libcudnn.so.5` points to `libcudnn.so.5.1`.

    [root@bogon lib64]# ln -s libcudnn.so.5.1 libcudnn.so.5
    [root@bogon lib64]# ll libcudnn*
    lrwxrwxrwx. 1 root root       15 Mar 23 16:58 libcudnn.so.5 -> libcudnn.so.5.1
    lrwxrwxrwx. 1 root root       17 Mar 20 17:12 libcudnn.so.5.1 -> libcudnn.so.5.1.5
    -rwxr-xr-x. 1 root root 79337624 Mar 20 17:11 libcudnn.so.5.1.5
    -rw-r--r--. 1 root root 69756172 Mar 20 17:12 libcudnn_static.a

Now the `libcudnn.so.5` file exists.
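If this fix has to be repeated on several machines, the link step can be scripted so that it is safe to run more than once. The sketch below is one possible way to do that, not part of the original setup; `ensure_symlink` is a hypothetical helper, and the demonstration runs in a temporary directory rather than in the real CUDA `lib64` directory, which would need root permissions.

```python
import os
import tempfile


def ensure_symlink(directory, target, link_name):
    """Create link_name -> target inside directory unless link_name already exists.

    Returns True when a new link was created, False when nothing was changed.
    """
    link_path = os.path.join(directory, link_name)
    if os.path.lexists(link_path):
        return False
    # Relative target, equivalent to `ln -s libcudnn.so.5.1 libcudnn.so.5` run in that directory.
    os.symlink(target, link_path)
    return True


# Demonstration in a scratch directory containing an empty stand-in file.
demo_dir = tempfile.mkdtemp()
open(os.path.join(demo_dir, "libcudnn.so.5.1"), "w").close()

created = ensure_symlink(demo_dir, "libcudnn.so.5.1", "libcudnn.so.5")
print(created, os.readlink(os.path.join(demo_dir, "libcudnn.so.5")))
```

Calling `ensure_symlink` a second time with the same arguments returns `False` and leaves the existing link untouched.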

Run the MNIST handwritten-digit recognition program again to verify.

    [root@bogon tensorflow]# python mnist_demo1.py
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcublas.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcudnn.so.5 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcufft.so.8.0 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcuda.so.1 locally
    I tensorflow/stream_executor/dso_loader.cc:135] successfully opened CUDA library libcurand.so.8.0 locally
    Extracting MNIST_data/train-images-idx3-ubyte.gz
    Extracting MNIST_data/train-labels-idx1-ubyte.gz
    Extracting MNIST_data/t10k-images-idx3-ubyte.gz
    Extracting MNIST_data/t10k-labels-idx1-ubyte.gz
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE3 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:885] Found device 0 with properties:
    name: GeForce GTX 1080
    major: 6 minor: 1 memoryClockRate (GHz) 1.7335
    pciBusID 0000:82:00.0
    Total memory: 7.92GiB
    Free memory: 7.81GiB
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:906] DMA: 0
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:916] 0: Y
    I tensorflow/core/common_runtime/gpu/gpu_device.cc:975] Creating TensorFlow device (/gpu:0) -> (device: 0, name: GeForce GTX 1080, pci bus id: 0000:82:00.0)
    0.9092

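At this point, my runtime environment is GPU-based: cuDNN loads, the GTX 1080 is registered as `/gpu:0`, and the program prints a test accuracy of 0.9092.

The contents of the test script, `mnist_demo1.py`, are attached below: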

    #!/usr/bin/env python
    # -*- coding: utf-8 -*-
    import tensorflow as tf
    import tensorflow.examples.tutorials.mnist.input_data as input_data

    # Load MNIST with one-hot encoded labels
    mnist = input_data.read_data_sets("MNIST_data", one_hot=True)
    sess = tf.InteractiveSession()

    # Inputs: flattened 28x28 images and one-hot labels
    x = tf.placeholder("float", shape=[None, 784])
    y_ = tf.placeholder("float", shape=[None, 10])

    # Softmax regression parameters, initialized to zero
    w = tf.Variable(tf.zeros([784, 10]))
    b = tf.Variable(tf.zeros([10]))
    init = tf.global_variables_initializer()
    sess.run(init)

    # Model, loss, and one gradient-descent training step
    y = tf.nn.softmax(tf.matmul(x, w) + b)
    cross_entropy = -tf.reduce_sum(y_ * tf.log(y))
    train_step = tf.train.GradientDescentOptimizer(0.01).minimize(cross_entropy)

    # Train for 1000 steps on mini-batches of 50 examples
    for i in range(1000):
        batch = mnist.train.next_batch(50)
        train_step.run(feed_dict={x: batch[0], y_: batch[1]})

    # Fraction of test images whose predicted class matches the label
    correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))
    print(accuracy.eval(feed_dict={x: mnist.test.images, y_: mnist.test.labels}))
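To make the loss in the script concrete, here is a plain-Python sketch of the same two formulas, softmax followed by summed cross-entropy, for a single example. It uses no TensorFlow and is only an illustration: the helper names are invented here, and subtracting the maximum before exponentiating is a common numerical-stability step added for this sketch, not something written in the script.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]  # shift by the max for stability
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(one_hot, probs):
    """Same form as -reduce_sum(y_ * log(y)) for one example."""
    return -sum(t * math.log(p) for t, p in zip(one_hot, probs))


# With w and b initialized to zeros, matmul(x, w) + b is all zeros for any input.
logits = [0.0] * 10
label = [0.0] * 10
label[3] = 1.0  # one-hot label for the digit 3

probs = softmax(logits)
loss = cross_entropy(label, probs)
print(probs[3], loss)
```

Because every class starts with probability 1/10, the loss before training is ln(10), roughly 2.30 per example, whatever the true label is.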

One last point, about this part of the output above:

    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE3 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.
    W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.

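I have not dealt with these warnings yet. The fix I know of is to build TensorFlow from source with bazel, so that the library is compiled to use these CPU instructions. Since the warnings have little effect on the experiment, I am leaving them alone for now.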
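Before spending time on a source build, it can help to see which of the instruction sets named in the warnings the CPU reports. The sketch below parses text in the format of `/proc/cpuinfo` on Linux. It assumes the usual kernel flag names (SSE3 is listed as `pni`), and the function name `supported_features` is hypothetical. It says nothing about how TensorFlow itself was compiled.

```python
# Instruction sets from the warnings, mapped to their /proc/cpuinfo flag names.
WANTED = {
    "SSE3": "pni",
    "SSE4.1": "sse4_1",
    "SSE4.2": "sse4_2",
    "AVX": "avx",
    "AVX2": "avx2",
    "FMA": "fma",
}


def supported_features(cpuinfo_text):
    """Return the sorted names from WANTED whose flag appears in a 'flags' line."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            flags.update(line.split(":", 1)[1].split())
    return sorted(name for name, flag in WANTED.items() if flag in flags)


# Shortened, made-up sample in the /proc/cpuinfo format.
sample = "processor\t: 0\nflags\t\t: fpu sse sse2 pni ssse3 fma sse4_1 sse4_2 avx avx2\n"
print(supported_features(sample))
```

On a real machine the input would come from `open("/proc/cpuinfo").read()`.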
