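After more than two weeks of work, I finally finished my RCNN code. It was an interesting project, and it let me review several practical topics along the way, so I am summarizing my experience here to share with you. On the theory side, there are already many RCNN tutorials, so I will not explain it in detail. If you are interested, this blog post is a good place to start.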

System Overview

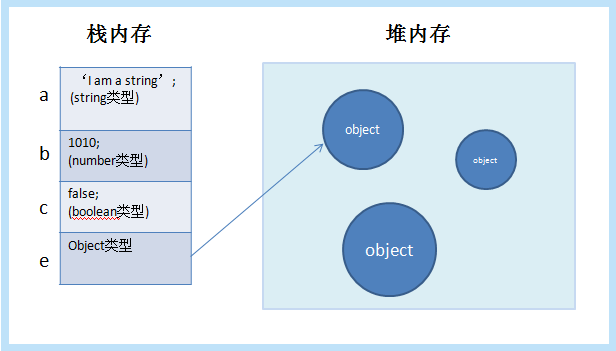

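RCNN is built on top of a CNN classification model (AlexNet in the code below). To improve object recognition, before an image goes through the CNN, a traditional algorithm first produces roughly 2000 candidate object boxes (the code in this post uses selective search for that step). These candidate boxes are then fed into the CNN, the features from the layer just before the output layer are extracted, and trained SVMs classify the objects. The more interesting parts are fine-tuning the pretrained network, extracting the features of the last layer before the output layer from the fine-tuned network, and training the SVM classifiers. Let's see how to implement this model!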

Code Walkthrough

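To keep the code simple, it is written with a library (tflearn, as the code below shows). See its official website for details.

Let's first go through the system flow:

The first step is training. Here we start from an existing AlexNet project that trains on a 17-category flower dataset (the run ID in the code below is alexnet_oxflowers17), that is, a project that distinguishes different flower species. The author of that code wrote all the functions carefully, but did not provide a main routine or support for resuming training from a saved model, so here is my code: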

import os

import tflearn


def train(network, X, Y):
    # Training
    model = tflearn.DNN(network, checkpoint_path='model_alexnet',
                        max_checkpoints=1, tensorboard_verbose=2,
                        tensorboard_dir='output')
    # Resume support: if a saved model already exists, load it and
    # continue training from there.
    # Note: depending on the TensorFlow version, the checkpoint may be
    # written as several files (for example model_save.model.index), in
    # which case this check needs adjusting.
    if os.path.isfile('model_save.model'):
        model.load('model_save.model')
    model.fit(X, Y, n_epoch=100, validation_set=0.1, shuffle=True,
              show_metric=True, batch_size=64, snapshot_step=200,
              snapshot_epoch=False, run_id='alexnet_oxflowers17')  # epoch = 1000
    # Save the trained model
    model.save('model_save.model')

We also want to check that the model works properly. Here is the test code:

import numpy as np
from PIL import Image

# Image preprocessing functions:
# ------------------------------------------------------------------------------------------------
# First, read the image file into a PIL Image object
def load_image(img_path):
    img = Image.open(img_path)
    return img

# Resize the Image to 224 * 224 (the three RGB channels stay unchanged).
# Image.LANCZOS is the same filter as the older Image.ANTIALIAS alias,
# which was removed in Pillow 10.
def resize_image(in_image, new_width, new_height, out_image=None,
                 resize_mode=Image.LANCZOS):
    img = in_image.resize((new_width, new_height), resize_mode)
    if out_image:
        img.save(out_image)
    return img

# Load the Image and convert it to a float32 array
def pil_to_nparray(pil_image):
    pil_image.load()
    return np.asarray(pil_image, dtype="float32")

from tflearn.layers.core import input_data, dropout, fully_connected
from tflearn.layers.conv import conv_2d, max_pool_2d
from tflearn.layers.normalization import local_response_normalization
from tflearn.layers.estimator import regression

# Network definition:
# ------------------------------------------------------------------------------------------------
def create_alexnet(num_classes):
    # Building 'AlexNet'
    network = input_data(shape=[None, 224, 224, 3])
    network = conv_2d(network, 96, 11, strides=4, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = conv_2d(network, 256, 5, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = conv_2d(network, 384, 3, activation='relu')
    network = conv_2d(network, 384, 3, activation='relu')
    network = conv_2d(network, 256, 3, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = fully_connected(network, 4096, activation='tanh')
    network = dropout(network, 0.5)
    network = fully_connected(network, 4096, activation='tanh')
    network = dropout(network, 0.5)
    network = fully_connected(network, num_classes, activation='softmax')
    network = regression(network, optimizer='momentum',
                         loss='categorical_crossentropy',
                         learning_rate=0.001)
    return network

# This is the function we use to predict the class of the input images
def predict(network, modelfile, images):
    model = tflearn.DNN(network)
    model.load(modelfile)
    return model.predict(images)

if __name__ == '__main__':
    img_path = 'testimg7.jpg'
    imgs = []
    img = load_image(img_path)
    img = resize_image(img, 224, 224)
    imgs.append(pil_to_nparray(img))
    net = create_alexnet(17)
    predicted = predict(net, 'model_save.model', imgs)
    print(predicted)

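So far nothing has been directly related to RCNN. Note, however, that the .model file saved above is our pretrained model. Now we start building the RCNN system proper, beginning with the code for the traditional box-proposal step.

The algorithm used in the paper is selective search (see the call in the code below). I have no hands-on experience with it, so writing it from scratch would be very time-consuming. I took a shortcut and used an existing library. The key point of the preprocessing code is therefore another concept: IOU, or intersection over union. This concept is useful because when we annotate an image by hand, we usually mark only one object in it and treat everything else as background. Given that, when the computer proposes many candidate boxes at once, how do we decide which boxes correspond to the object? Boxes that do not overlap the annotation at all are naturally treated as background, but how should the overlapping boxes be classified? This is where IOU comes in: if the overlap ratio exceeds a threshold, we label the box with the object class; otherwise we label it as background. For a more detailed explanation, click here.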
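The definition can be stated compactly. For a proposal box $A$ and an annotated box $B$ (symbols introduced here only for illustration, they do not appear in the code), with $|\cdot|$ denoting area:

```latex
\[
\mathrm{IoU}(A, B)
  = \frac{|A \cap B|}{|A \cup B|}
  = \frac{|A \cap B|}{|A| + |B| - |A \cap B|}
\]
```

Two identical boxes give a value of 1, and two boxes that do not overlap give 0.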

So how do we implement IOU in code?

# IOU Part 1
def if_intersection(xmin_a, xmax_a, ymin_a, ymax_a, xmin_b, xmax_b, ymin_b, ymax_b):
    # Two axis-aligned boxes overlap only if their x ranges overlap and
    # their y ranges overlap. This single test also covers the cases where
    # one box contains the other or the two boxes cross each other, which
    # a corner-by-corner comparison can miss.
    if not (xmin_a < xmax_b and xmin_b < xmax_a and
            ymin_a < ymax_b and ymin_b < ymax_a):
        return False
    # When they intersect, sort the four x coordinates and the four y
    # coordinates; the two middle values bound the intersection rectangle.
    x_sorted_list = sorted([xmin_a, xmax_a, xmin_b, xmax_b])
    y_sorted_list = sorted([ymin_a, ymax_a, ymin_b, ymax_b])
    x_intersect_w = x_sorted_list[2] - x_sorted_list[1]
    y_intersect_h = y_sorted_list[2] - y_sorted_list[1]
    area_inter = x_intersect_w * y_intersect_h
    return area_inter

# IOU Part 2
def IOU(ver1, vertice2):
    # ver1 is the reference box as [x, y, w, h]; vertice2 is the proposal,
    # indexed here as [xmin, ymin, xmax, ymax, w, h].
    # Convert the reference box to corner form
    vertice1 = [ver1[0], ver1[1], ver1[0]+ver1[2], ver1[1]+ver1[3]]
    area_inter = if_intersection(vertice1[0], vertice1[2], vertice1[1], vertice1[3],
                                 vertice2[0], vertice2[2], vertice2[1], vertice2[3])
    # If the boxes intersect, compute the IOU
    if area_inter:
        area_1 = ver1[2] * ver1[3]
        area_2 = vertice2[4] * vertice2[5]
        iou = float(area_inter) / (area_1 + area_2 - area_inter)
        return iou
    return False
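To make the numbers concrete, here is a minimal, self-contained sketch of the same idea, independent of the functions above. The function name iou_xywh and the (x, y, w, h) box layout are assumptions made for this illustration only.

```python
def iou_xywh(box_a, box_b):
    """IoU of two boxes given as (x, y, w, h); 0.0 when they do not overlap."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    # Width and height of the overlap; non-positive means no overlap.
    inter_w = min(ax + aw, bx + bw) - max(ax, bx)
    inter_h = min(ay + ah, by + bh) - max(ay, by)
    if inter_w <= 0 or inter_h <= 0:
        return 0.0
    inter = inter_w * inter_h
    union = aw * ah + bw * bh - inter
    return inter / union


# Two 10x10 boxes offset by 5 in both directions:
# intersection = 5 * 5 = 25, union = 100 + 100 - 25 = 175
print(iou_xywh((0, 0, 10, 10), (5, 5, 10, 10)))  # 25 / 175, about 0.143
```

With a threshold of 0.5, this pair would be labeled as background, since 0.143 is well below the threshold.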

After that, we use an IOU threshold of 0.5 for fine-tuning and 0.3 when training the SVMs. The function that implements this idea is as follows:

import pickle

import numpy as np
import skimage.io
import selectivesearch
from PIL import Image

# Read in data and save data for Alexnet
def load_train_proposals(datafile, num_clss, threshold=0.5, svm=False, save=False, save_path='dataset.pkl'):
    labels = []
    images = []
    with open(datafile, 'r') as train_list:
        for line in train_list:
            tmp = line.strip().split(' ')
            # tmp[0] = image address
            # tmp[1] = label
            # tmp[2] = rectangle vertices
            img = skimage.io.imread(tmp[0])
            # Python implementation of selective search
            img_lbl, regions = selectivesearch.selective_search(img, scale=500, sigma=0.9, min_size=10)
            candidates = set()
            for r in regions:
                # Skip duplicate rectangles (same box, different segments)
                if r['rect'] in candidates:
                    continue
                # Skip boxes that are too small
                if r['size'] < 220:
                    continue
                # Crop the proposal out of the image
                # (clip_pic is a helper that is not shown in this post)
                proposal_img, proposal_vertice = clip_pic(img, r['rect'])
                # Skip empty crops
                if len(proposal_img) == 0:
                    continue
                # Skip boxes whose width or height is 0
                x, y, w, h = r['rect']
                if w == 0 or h == 0:
                    continue
                # Skip crops whose array has any zero-sized dimension
                [a, b, c] = np.shape(proposal_img)
                if a == 0 or b == 0 or c == 0:
                    continue
                # Resize to 224 * 224 for input
                im = Image.fromarray(proposal_img)
                resized_proposal_img = resize_image(im, 224, 224)
                candidates.add(r['rect'])
                img_float = pil_to_nparray(resized_proposal_img)
                images.append(img_float)
                # Compute the IOU against the annotated box
                ref_rect = tmp[2].split(',')
                ref_rect_int = [int(i) for i in ref_rect]
                iou_val = IOU(ref_rect_int, proposal_vertice)
                # labels, let 0 represent default class, which is background
                index = int(tmp[1])
                if not svm:
                    label = np.zeros(num_clss+1)
                    if iou_val < threshold:
                        label[0] = 1
                    else:
                        label[index] = 1
                    labels.append(label)
                else:
                    if iou_val < threshold:
                        labels.append(0)
                    else:
                        labels.append(index)
    if save:
        with open(save_path, 'wb') as f:
            pickle.dump((images, labels), f)
    return images, labels

Note that when the svm argument is True, the labels do not need to be one-hot encoded; plain integer class indices are used instead.
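The difference between the two label formats can be sketched in isolation. This is a simplified illustration, not the code used in the project: the helper name make_label is hypothetical, and plain Python lists stand in for the NumPy arrays used above.

```python
def make_label(iou_val, class_index, num_classes, threshold, one_hot=True):
    """Label one proposal: background (class 0) if its IOU is below the threshold."""
    is_object = iou_val >= threshold
    if one_hot:
        # Fine-tuning format: a vector of length num_classes + 1,
        # where slot 0 is reserved for the background class.
        label = [0] * (num_classes + 1)
        label[class_index if is_object else 0] = 1
        return label
    # SVM format: a single integer class index, 0 meaning background.
    return class_index if is_object else 0


print(make_label(0.7, 2, 3, threshold=0.5))                 # [0, 0, 1, 0]
print(make_label(0.2, 2, 3, threshold=0.5))                 # [1, 0, 0, 0]
print(make_label(0.4, 2, 3, threshold=0.3, one_hot=False))  # 2
```

The last call shows the effect of the lower SVM threshold: a proposal with an IOU of 0.4 counts as background during fine-tuning (threshold 0.5) but as an object when the SVM labels are built (threshold 0.3).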

After preprocessing the input images, we fine-tune the network with the preprocessed image set.

# Use an already trained AlexNet with the last layer redesigned.
# This defines the fine-tuning network. Following the paper, we discard
# AlexNet's last layer (the softmax) and replace it with a new softmax sized
# for the new number of classes + 1 (the extra class is the background).
# Passing restore=False means no saved weights are restored for that last
# softmax layer.
# Requires the same tflearn layer functions (input_data, conv_2d, ...) as
# the first AlexNet definition.
def create_alexnet(num_classes, restore=False):
    # Building 'AlexNet'
    network = input_data(shape=[None, 224, 224, 3])
    network = conv_2d(network, 96, 11, strides=4, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = conv_2d(network, 256, 5, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = conv_2d(network, 384, 3, activation='relu')
    network = conv_2d(network, 384, 3, activation='relu')
    network = conv_2d(network, 256, 3, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = fully_connected(network, 4096, activation='tanh')
    network = dropout(network, 0.5)
    network = fully_connected(network, 4096, activation='tanh')
    network = dropout(network, 0.5)
    network = fully_connected(network, num_classes, activation='softmax', restore=restore)
    network = regression(network, optimizer='momentum',
                         loss='categorical_crossentropy',
                         learning_rate=0.001)
    return network

import os

import tflearn

# Training starts from the already trained AlexNet, i.e. it loads
# model_save.model. After training, the result is saved to
# fine_tune_model_save.model.
def fine_tune_Alexnet(network, X, Y):
    # Training
    model = tflearn.DNN(network, checkpoint_path='rcnn_model_alexnet',
                        max_checkpoints=1, tensorboard_verbose=2,
                        tensorboard_dir='output_RCNN')
    if os.path.isfile('fine_tune_model_save.model'):
        print("Loading the fine tuned model")
        model.load('fine_tune_model_save.model')
    elif os.path.isfile('model_save.model'):
        print("Loading the alexnet")
        model.load('model_save.model')
    else:
        print("No file to load, error")
        return False
    model.fit(X, Y, n_epoch=10, validation_set=0.1, shuffle=True,
              show_metric=True, batch_size=64, snapshot_step=200,
              snapshot_epoch=False, run_id='alexnet_rcnnflowers2')  # epoch = 1000
    # Save the model
    model.save('fine_tune_model_save.model')

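These two functions complete the fine-tuning. At this point we are done using the network directly as a classifier. Next, we need to read the features of the last layer before the output and use them to train the SVMs. So how do we get these features? It is simple: we just remove the output layer. The code is as follows: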

# Use an already trained AlexNet with the output layer removed, so that
# model.predict returns the 4096-dimensional features of the last hidden layer.
# Requires the same tflearn layer functions (input_data, conv_2d, ...) as
# the first AlexNet definition.
def create_alexnet(num_classes, restore=False):
    # Building 'AlexNet'
    network = input_data(shape=[None, 224, 224, 3])
    network = conv_2d(network, 96, 11, strides=4, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = conv_2d(network, 256, 5, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = conv_2d(network, 384, 3, activation='relu')
    network = conv_2d(network, 384, 3, activation='relu')
    network = conv_2d(network, 256, 3, activation='relu')
    network = max_pool_2d(network, 3, strides=2)
    network = local_response_normalization(network)
    network = fully_connected(network, 4096, activation='tanh')
    network = dropout(network, 0.5)
    network = fully_connected(network, 4096, activation='tanh')
    network = regression(network, optimizer='momentum',
                         loss='categorical_crossentropy',
                         learning_rate=0.001)
    return network

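With the features in hand, we need to train the SVMs. Why train SVMs at all? Would using the CNN directly not work? The blog post mentioned earlier discusses this question. In short, SVMs are well suited to training on small samples, and using them here can improve accuracy. The SVM training code is as follows: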

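import os

import numpy as np
from sklearn import svm

# Construct cascade svms
def train_svms(train_file_folder, model):
    # The training data is split across separate txt files,
    # and each file contains only one class
    listings = os.listdir(train_file_folder)
    svms = []
    for train_file in listings:
        if "pkl" in train_file:
            continue
        # Get the data for training the SVM of a single class
        # (generate_single_svm_train is a helper that is not shown in this post)
        X, Y = generate_single_svm_train(os.path.join(train_file_folder, train_file))
        train_features = []
        for i in X:
            feats = model.predict([i])
            train_features.append(feats[0])
        print("feature dimension")
        print(np.shape(train_features))
        # Train one SVM per class; together they form the cascade
        # that distinguishes all objects
        clf = svm.LinearSVC()
        print("fit svm")
        clf.fit(train_features, Y)
        svms.append(clf)
    return svms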

What do we do when recognizing objects? First, we use the following function to get the candidate object boxes for the input image:

def image_proposal(img_path):
    img = skimage.io.imread(img_path)
    img_lbl, regions = selectivesearch.selective_search(
        img, scale=500, sigma=0.9, min_size=10)
    candidates = set()
    images = []
    vertices = []
    for r in regions:
        # Skip duplicate rectangles (same box, different segments)
        if r['rect'] in candidates:
            continue
        # Skip boxes that are too small
        if r['size'] < 220:
            continue
        # Crop the proposal out of the image
        # (prep.clip_pic comes from the preprocessing module, not shown here)
        proposal_img, proposal_vertice = prep.clip_pic(img, r['rect'])
        # Skip empty crops
        if len(proposal_img) == 0:
            continue
        # Skip boxes whose width or height is 0
        x, y, w, h = r['rect']
        if w == 0 or h == 0:
            continue
        # Skip crops whose array has any zero-sized dimension
        [a, b, c] = np.shape(proposal_img)
        if a == 0 or b == 0 or c == 0:
            continue
        # Resize to 224 * 224 for input
        im = Image.fromarray(proposal_img)
        resized_proposal_img = resize_image(im, 224, 224)
        candidates.add(r['rect'])
        img_float = pil_to_nparray(resized_proposal_img)
        images.append(img_float)
        vertices.append(r['rect'])
    return images, vertices

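This process is similar to the function used in preprocessing, but simpler, because we do not need to deal with labels. Afterwards, we feed these images into the network one at a time to get the corresponding outputs (we could in fact process them all together, but my computer kept freezing, possibly because of memory or some other problem), and finally apply the SVMs to get the prediction.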
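A middle ground between one image at a time and all images at once is to process the proposals in small batches. The sketch below is only an illustration of that idea, not part of the original code: predict_in_batches and its predict_fn parameter are hypothetical names, where predict_fn stands for any callable that maps a list of inputs to a list of outputs (for example a wrapper around the model's predict method).

```python
def predict_in_batches(predict_fn, items, batch_size=16):
    """Run predict_fn over items in chunks of at most batch_size, keeping order."""
    outputs = []
    for start in range(0, len(items), batch_size):
        batch = items[start:start + batch_size]
        outputs.extend(predict_fn(batch))
    return outputs


# Stand-in for a real model: doubles each input and records the batch sizes.
batch_sizes = []

def fake_predict(batch):
    batch_sizes.append(len(batch))
    return [x * 2 for x in batch]

result = predict_in_batches(fake_predict, list(range(5)), batch_size=2)
print(result)       # [0, 2, 4, 6, 8]
print(batch_sizes)  # [2, 2, 1]
```

A smaller batch_size lowers peak memory use at the cost of more calls, so it can be tuned to whatever the machine tolerates.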

You must be curious about the test results. Below, the result of the plain classifier is compared with the result of RCNN.

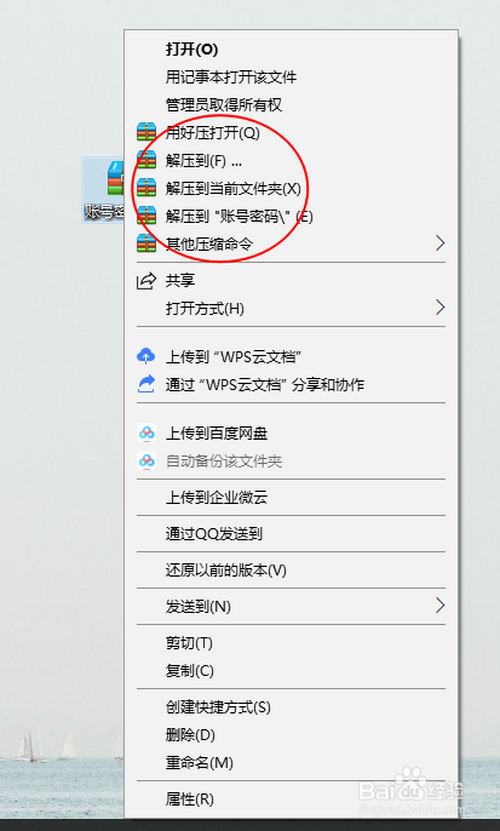

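First, let's look at the result for this image:

The analysis gives the following result:

The image is classified as flower class 4. The actual class is the last one in the 17-class dataset, that is, flower class 17. Here, class 17 ranks second after class 4, with a probability of 34%. So what is the RCNN result? See the figure below:

Clearly, RCNN (class 1) gets it right with very high confidence. If you are interested, click here to view the code.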
