2022-02-14

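Reference: Hands-On Machine Learning with Scikit-Learn, Keras, and TensorFlow: Concepts, Tools, and Techniques to Build Intelligent Systems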

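    import tensorflow as tf
    from tensorflow import keras

    # L2 regularizer shared by both layers; its penalties appear in model.losses
    l2_reg = keras.regularizers.l2(0.05)
    model = keras.models.Sequential([
        keras.layers.Dense(30, activation="elu", kernel_initializer="he_normal",
                           kernel_regularizer=l2_reg),
        keras.layers.Dense(1, kernel_regularizer=l2_reg)
    ])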

![Image 1: Manually implementing the TensorFlow training loop (example), 唐朝资源网](https://images.43s.cn/wp-content/uploads//2022/06/1655194647886_0.gif)

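    n_epochs = 5
    batch_size = 32
    n_steps = len(X_train) // batch_size  # number of batches per epoch
    # `learning_rate` replaces the deprecated `lr` argument
    optimizer = keras.optimizers.Nadam(learning_rate=0.01)
    loss_fn = keras.losses.mean_squared_error
    mean_loss = keras.metrics.Mean()  # running mean of the total loss
    metrics = [keras.metrics.MeanAbsoluteError()]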
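The training loop calls two helpers, `random_batch` and `print_status_bar`, that this post never defines. Below is a minimal sketch of what they might look like. It assumes the data are NumPy arrays and that the metric objects expose `name` and `result()` the way Keras metrics do. The function bodies and the `batch_size=32` default are assumptions inferred from how the loop calls them, not code taken from the post.

```python
import numpy as np


def random_batch(X, y, batch_size=32):
    """Sample `batch_size` rows (with replacement) from X and y."""
    idx = np.random.randint(len(X), size=batch_size)
    return X[idx], y[idx]


def print_status_bar(iteration, total, loss, metrics=None):
    """Print progress on one line: 'iteration/total - name: value - ...'."""
    parts = ["{}: {:.4f}".format(m.name, float(m.result()))
             for m in [loss] + (metrics or [])]
    # Stay on the same line until the epoch is complete
    end = "" if iteration < total else "\n"
    print("\r{}/{} - ".format(iteration, total) + " - ".join(parts), end=end)
```

Because `random_batch` samples with replacement, an epoch of `n_steps` batches does not necessarily visit every training row exactly once. Shuffling the indices once per epoch would be the alternative if that matters.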


    for epoch in range(1, n_epochs + 1):
        print("Epoch {}/{}".format(epoch, n_epochs))
        for step in range(1, n_steps + 1):
            X_batch, y_batch = random_batch(X_train_scaled, y_train)
            with tf.GradientTape() as tape:
                y_pred = model(X_batch)
                main_loss = tf.reduce_mean(loss_fn(y_batch, y_pred))
                a = main_loss     # debug: main (MSE) loss
                b = model.losses  # debug: one regularization loss per layer
                loss = tf.add_n([main_loss] + model.losses)
            gradients = tape.gradient(loss, model.trainable_variables)
            optimizer.apply_gradients(zip(gradients, model.trainable_variables))
            # Re-apply any weight constraints after the update
            for variable in model.variables:
                if variable.constraint is not None:
                    variable.assign(variable.constraint(variable))
            c = loss  # debug: total loss, i.e. a plus the sum of b
            mean_loss(loss)
            for metric in metrics:
                metric(y_batch, y_pred)
            print_status_bar(step * batch_size, len(y_train), mean_loss, metrics)
        print_status_bar(len(y_train), len(y_train), mean_loss, metrics)
        # Reset the running metrics before the next epoch
        for metric in [mean_loss] + metrics:
            metric.reset_state()  # named reset_states() before TF 2.5

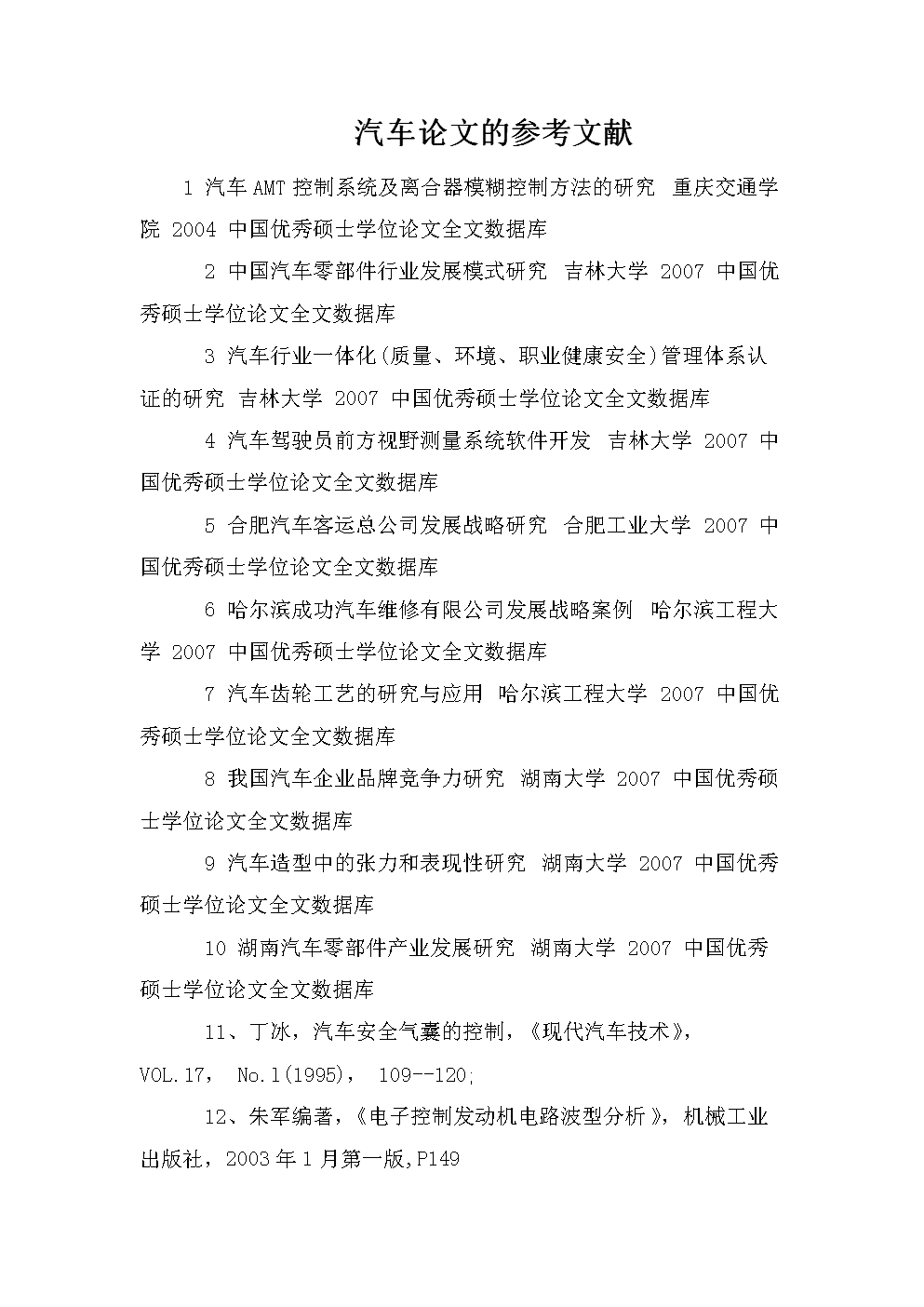

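Because the model uses regularization (kernel_regularizer), model.losses holds the regularization loss of each layer. The total loss equals the main loss plus the regularization losses.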
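To make the composition of the total loss concrete, here is a small NumPy sketch of the arithmetic. It assumes the `l2(0.05)` regularizer contributes `0.05 * sum(w**2)` for each regularized kernel, which is how Keras defines its L2 penalty. The kernel, target, and prediction values are made up purely for illustration.

```python
import numpy as np

l2 = 0.05  # same factor as keras.regularizers.l2(0.05)

# Hypothetical kernels for two regularized layers (illustrative values only)
kernels = [np.array([[1.0, -2.0], [0.5, 0.0]]), np.array([[2.0], [-1.0]])]

# Hypothetical targets and predictions for one batch
y_batch = np.array([1.0, 2.0, 3.0])
y_pred = np.array([1.5, 2.0, 2.0])

# Main loss: mean squared error over the batch
main_loss = np.mean((y_batch - y_pred) ** 2)

# One penalty per regularized layer, playing the role of model.losses
reg_losses = [l2 * np.sum(w ** 2) for w in kernels]

# Total loss, playing the role of tf.add_n([main_loss] + model.losses)
total_loss = main_loss + sum(reg_losses)

print(main_loss, reg_losses, total_loss)
```

In the training loop, `a`, `b`, and `c` correspond to `main_loss`, `reg_losses`, and `total_loss` here. The gradient is taken with respect to the total, so the penalty terms influence the weight updates as well.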
