Running the MNIST (Handwritten Digit Recognition) Example

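First, you need a working build environment in which TensorFlow has been compiled and installed correctly. For environment setup, see: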

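I. Running MNIST

1) Download the training data first

Download all four archives, create a directory under the directory where the code below will run, and put the four archives in it.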

train-images-idx3-ubyte.gz: training set images

train-labels-idx1-ubyte.gz: training set labels (28881 bytes)

t10k-images-idx3-ubyte.gz: test set images

t10k-labels-idx1-ubyte.gz: test set labels (4542 bytes)

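You can also skip the manual download, provided the machine running the code can reach the download location. If that causes problems, see [Problem 1)].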
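Before running the code, it can help to confirm that all four archives are in place. The following is a minimal sketch, assuming the standard MNIST archive names and a data directory named MNIST_data (the directory the old-version script reads from). The helper name missing_mnist_files is hypothetical and not part of TensorFlow.

```python
import os

# Standard MNIST archive names (assumed; adjust if your copies are named differently).
MNIST_FILES = [
    "train-images-idx3-ubyte.gz",
    "train-labels-idx1-ubyte.gz",
    "t10k-images-idx3-ubyte.gz",
    "t10k-labels-idx1-ubyte.gz",
]


def missing_mnist_files(data_dir):
    """Return the names of the expected archives that are not in data_dir."""
    return [name for name in MNIST_FILES
            if not os.path.isfile(os.path.join(data_dir, name))]


if __name__ == "__main__":
    missing = missing_mnist_files("MNIST_data")
    if missing:
        print("Missing archives:", ", ".join(missing))
    else:
        print("All four MNIST archives are present.")
```

If the list comes back empty, the scripts should be able to read the data without downloading anything.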

2) MNIST code

A: Old version (from the official tutorial)

Chinese version:

The complete code is as follows: mnist.py

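import input_data
import tensorflow as tf

FLAGS = None

# Load MNIST from the MNIST_data directory with one-hot labels
mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

# Model: softmax regression over flattened 28x28 images (784 pixels)
x = tf.placeholder("float", [None, 784])
w = tf.Variable(tf.zeros([784, 10]))
b = tf.Variable(tf.zeros([10]))
y = tf.nn.softmax(tf.matmul(x, w) + b)

# Loss: cross-entropy between the true labels y_ and the predictions y
y_ = tf.placeholder("float", [None, 10])
cross_entropy = -tf.reduce_sum(y_ * tf.log(y))
train_step = tf.train.GradientDescentOptimizer(0.01).minimize(cross_entropy)

# initialize_all_variables is the old API; the new version below uses
# tf.global_variables_initializer instead
init = tf.initialize_all_variables()
sess = tf.Session()
sess.run(init)

# Train for 1000 steps on mini-batches of 100 examples
for _ in range(1000):
    batch_xs, batch_ys = mnist.train.next_batch(100)
    sess.run(train_step, feed_dict={x: batch_xs, y_: batch_ys})

# Evaluate accuracy on the test set
correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))
print(sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels}))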
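To make the model's arithmetic concrete, here is a small pure-Python sketch of the three quantities the listing computes: softmax probabilities, the cross-entropy -sum(y_ * log(y)), and whether the predicted class matches the label. It handles a single example with made-up scores for three classes, whereas the script sums the loss over a batch of 100 examples with 10 classes. The helper names are illustrative only.

```python
import math


def softmax(logits):
    """Turn a list of raw scores into probabilities that sum to 1."""
    exps = [math.exp(v) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(one_hot_label, probs):
    """-sum(y_ * log(y)) for a single example."""
    return -sum(t * math.log(p) for t, p in zip(one_hot_label, probs))


def argmax(values):
    """Index of the largest entry (the predicted or true class)."""
    return max(range(len(values)), key=lambda i: values[i])


# Made-up scores for a 3-class toy problem (MNIST has 10 classes).
logits = [2.0, 1.0, 0.1]
label = [1.0, 0.0, 0.0]

probs = softmax(logits)
loss = cross_entropy(label, probs)
is_correct = argmax(probs) == argmax(label)
print(probs, loss, is_correct)
```

Because the label is one-hot, the loss reduces to the negative log of the probability assigned to the true class, so a confident correct prediction gives a loss close to zero.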

input_data.py

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import gzip
import os
import tempfile

import numpy
from six.moves import urllib
from six.moves import xrange  # pylint: disable=redefined-builtin
import tensorflow as tf
from tensorflow.contrib.learn.python.learn.datasets.mnist import read_data_sets

Run:

python mnist.py

B: New version

The contents of input_data.py are the same; the main .py file is different.

Location of the .py file:

\\mnist.py

Complete code:

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import argparse
import sys
import os

# Hide TensorFlow's C++ info and warning logs; set this before importing tensorflow
os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

FLAGS = None


def main(_):
    # Import data
    mnist = input_data.read_data_sets(FLAGS.data_dir, one_hot=True)

    # Create the model
    x = tf.placeholder(tf.float32, [None, 784])
    W = tf.Variable(tf.zeros([784, 10]))
    b = tf.Variable(tf.zeros([10]))
    y = tf.matmul(x, W) + b

    # Define loss and optimizer
    y_ = tf.placeholder(tf.float32, [None, 10])

    # The raw formulation of cross-entropy,
    #
    #   tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(tf.nn.softmax(y)),
    #                                 reduction_indices=[1]))
    #
    # can be numerically unstable.
    #
    # So here we use tf.nn.softmax_cross_entropy_with_logits on the raw
    # outputs of 'y', and then average across the batch.
    cross_entropy = tf.reduce_mean(
        tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y))
    train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

    sess = tf.InteractiveSession()
    tf.global_variables_initializer().run()

    # Train
    for _ in range(1000):
        batch_xs, batch_ys = mnist.train.next_batch(100)
        sess.run(train_step, feed_dict={x: batch_xs, y_: batch_ys})

    # Test trained model
    correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    print(sess.run(accuracy, feed_dict={x: mnist.test.images,
                                        y_: mnist.test.labels}))


if __name__ == '__main__':
    parser = argparse.ArgumentParser()
    parser.add_argument('--data_dir', type=str, default='/tmp/tensorflow/mnist/input_data',
                        help='Directory for storing input data')
    FLAGS, unparsed = parser.parse_known_args()
    tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
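The comment in the listing says the raw cross-entropy formulation can be numerically unstable. The pure-Python sketch below illustrates the general idea with extreme, made-up scores: applying softmax first and then taking the log fails, while working directly from the raw scores with the log-sum-exp trick stays finite. This shows the principle only; it is not a claim about how tf.nn.softmax_cross_entropy_with_logits is implemented internally, and both function names are hypothetical.

```python
import math


def naive_cross_entropy(one_hot_label, logits):
    """Softmax first, then log: overflows or hits log(0) for large scores."""
    exps = [math.exp(v) for v in logits]
    total = sum(exps)
    return -sum(t * math.log(e / total) for t, e in zip(one_hot_label, exps))


def stable_cross_entropy(one_hot_label, logits):
    """Cross-entropy computed from raw scores with the log-sum-exp trick."""
    m = max(logits)
    # log(sum(exp(v))) rewritten as m + log(sum(exp(v - m))), so exp never overflows.
    log_sum_exp = m + math.log(sum(math.exp(v - m) for v in logits))
    return -sum(t * (v - log_sum_exp) for t, v in zip(one_hot_label, logits))


label = [1.0, 0.0]
extreme_logits = [1000.0, 0.0]

try:
    naive_cross_entropy(label, extreme_logits)
    naive_failed = False
except (OverflowError, ValueError):
    # math.exp(1000.0) is too large to represent as a float
    naive_failed = True

stable_loss = stable_cross_entropy(label, extreme_logits)
print(naive_failed, stable_loss)
```

For moderate scores the two functions agree, which is why the old version works in practice; the difference only shows up when the scores grow very large or very small.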

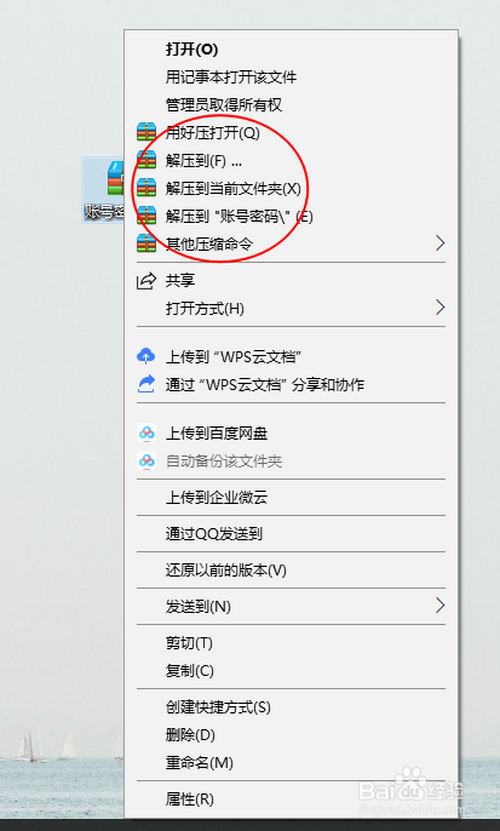

The data path is different, so copy the training data over:

cp /*.gz /tmp//mnist//
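The same copy step can be done from Python, which also creates the destination directory if it does not exist yet. This is a rough sketch: copy_archives is a hypothetical helper, and the source and destination directories are whatever you pass in.

```python
import glob
import os
import shutil


def copy_archives(src_dir, dst_dir):
    """Copy every .gz file from src_dir into dst_dir, creating dst_dir if needed."""
    if not os.path.isdir(dst_dir):
        os.makedirs(dst_dir)
    copied = []
    for path in sorted(glob.glob(os.path.join(src_dir, "*.gz"))):
        shutil.copy(path, dst_dir)
        copied.append(os.path.basename(path))
    return copied
```

For example, assuming the archives were placed in MNIST_data as in the old version, calling copy_archives("MNIST_data", "/tmp/tensorflow/mnist/input_data") would copy them to the default --data_dir of the new script and return the list of copied file names.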

Run:

.py
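Since the new version reads its data directory from the --data_dir flag, an alternative to copying the files is to pass the directory on the command line. The sketch below shows how parse_known_args separates the flag the script knows about from everything else, which the script then forwards to tf.app.run. The wrapper parse_flags and the --other flag are made up for illustration.

```python
import argparse


def parse_flags(argv):
    """Split argv into the known --data_dir flag and leftover arguments."""
    parser = argparse.ArgumentParser()
    parser.add_argument('--data_dir', type=str,
                        default='/tmp/tensorflow/mnist/input_data',
                        help='Directory for storing input data')
    # parse_known_args returns (namespace, list_of_unrecognized_arguments)
    return parser.parse_known_args(argv)


flags, unparsed = parse_flags(['--data_dir', 'MNIST_data', '--other', '1'])
print(flags.data_dir, unparsed)
```

With no arguments at all, data_dir falls back to the default path and the leftover list is empty.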
