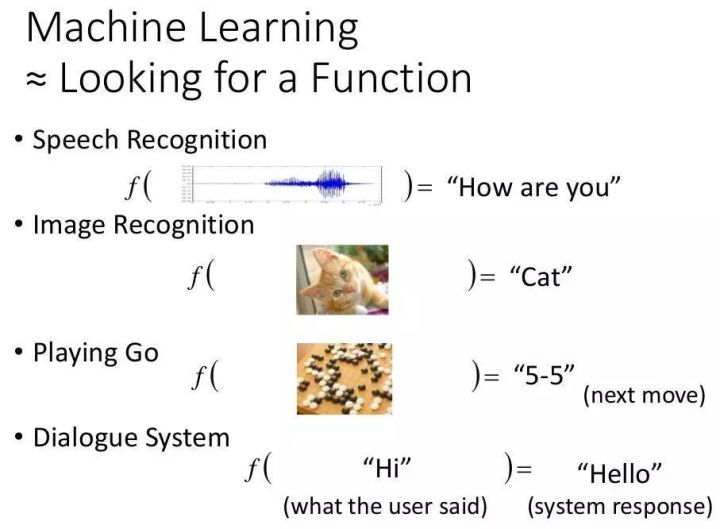

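In mathematics, regression means that variables in the real world are related by some function, and that this function is recovered from a batch of sample data. In other words, the sample data are used to "regress" to the true functional relationship.

Linear regression means that the variables are related linearly and that this relationship is found from a batch of sample data. The graph of a linear function is a straight line.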

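The equation of a linear function is:

$$y = wx + b$$

Linear regression determines this equation from a batch of sample data, that is, it determines the weight $w$ and the bias $b$.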
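As a point of comparison, for a single input variable the weight and bias can also be computed directly with the closed-form least-squares formulas. The sketch below is not the method this article uses (the article trains the model with gradient descent in TensorFlow). The helper name `fit_line` is hypothetical, and the sample data are the same values the article feeds to its model later.

```python
def fit_line(xs, ys):
    """Closed-form least-squares fit of y = w*x + b (hypothetical helper)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # w = covariance(x, y) / variance(x)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    w = cov_xy / var_x
    # The fitted line always passes through the point (mean_x, mean_y)
    b = mean_y - w * mean_x
    return w, b


w, b = fit_line([1, 2, 3, 4], [2, 4, 6, 8])
print(w, b)  # 2.0 0.0
```

For these samples the fit is exact, because every point lies on the line y = 2x.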

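To create a linear model, we therefore need a variable for the weight W, a variable for the bias b, and a placeholder for the input x. Let's start by building the model: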

    import tensorflow.compat.v1 as tf

    tf.disable_eager_execution()

    # Variable for the slope (W), initial value 0.4
    W = tf.Variable([0.4], dtype=tf.float32)
    # Variable for the intercept (b), initial value -0.4
    b = tf.Variable([-0.4], dtype=tf.float32)
    # Placeholder for the independent variable x
    x = tf.placeholder(tf.float32)

    # Linear regression equation
    linear_model = W * x + b

    # Initialize all variables
    sess = tf.Session()
    init = tf.global_variables_initializer()
    sess.run(init)

    # Run the model and print the y values
    print(sess.run(linear_model, feed_dict={x: [1, 2, 3, 4]}))

Output (console startup messages and TensorFlow warnings omitted):

    [0. 0.4 0.8000001 1.2 ]

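The code above simply evaluates the linear equation: it takes x values as input and outputs y values.

We need to train the weight w and the bias b on sample data. From the output y we compute the error (the difference between the predicted result and the known result), build a cost function from it, and use gradient descent to find the minimum of the cost function. This yields the final weight w and bias b.

Cost function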

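The cost function measures the gap between the model's actual output and the expected output. We will use the usual squared-error cost:

$$E = \frac{1}{2}(t - y)^2$$

where $t$ is the known target value and $y$ is the model's prediction. The code that follows sums the squared errors over all samples and omits the constant factor $\frac{1}{2}$, which does not change where the minimum lies. Note that in the code the placeholder `y` holds the target values and `linear_model` holds the predictions.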

    # Placeholder for y, which receives the y values of the samples
    y = tf.placeholder(tf.float32)

    # Sum of squared errors
    error = linear_model - y
    squared_errors = tf.square(error)
    loss = tf.reduce_sum(squared_errors)

    # Print the error
    print(sess.run(loss, feed_dict={x: [1, 2, 3, 4], y: [2, 4, 6, 8]}))
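The same arithmetic can be checked without TensorFlow. The following plain-Python sketch recomputes the sum of squared errors for the initial parameters W = 0.4 and b = -0.4 on the article's sample data. The function name `sum_squared_error` is made up for illustration.

```python
def sum_squared_error(w, b, xs, ts):
    """Sum over all samples of (prediction - target)^2, with prediction = w*x + b."""
    return sum((w * x + b - t) ** 2 for x, t in zip(xs, ts))


# Predictions are [0, 0.4, 0.8, 1.2]; targets are [2, 4, 6, 8].
# Squared errors: 4 + 12.96 + 27.04 + 46.24
loss = sum_squared_error(0.4, -0.4, [1, 2, 3, 4], [2, 4, 6, 8])
print(round(loss, 2))  # 90.24
```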

Complete code

![Image [1]: Introductory AI deep learning exercises (25) TensorFlow, example: linear regression](https://images.43s.cn/wp-content/uploads//2022/06/1655198256421_2.png)

    import tensorflow.compat.v1 as tf

    tf.disable_eager_execution()

    # Variable for the slope (W), initial value 0.4
    W = tf.Variable([0.4], dtype=tf.float32)
    # Variable for the intercept (b), initial value -0.4
    b = tf.Variable([-0.4], dtype=tf.float32)
    # Placeholder for the independent variable x
    x = tf.placeholder(tf.float32)

    # Linear regression equation
    linear_model = W * x + b

    # Initialize all variables
    sess = tf.Session()
    init = tf.global_variables_initializer()
    sess.run(init)

    # Run the model and print the y values
    print(sess.run(linear_model, feed_dict={x: [1, 2, 3, 4]}))

    # Placeholder for y, which receives the y values of the samples
    y = tf.placeholder(tf.float32)

    # Sum of squared errors
    error = linear_model - y
    squared_errors = tf.square(error)
    loss = tf.reduce_sum(squared_errors)

    # Print the error
    print(sess.run(loss, feed_dict={x: [1, 2, 3, 4], y: [2, 4, 6, 8]}))

Output (console startup messages and TensorFlow warnings omitted):

![Image [2]: Introductory AI deep learning exercises (25) TensorFlow, example: linear regression](https://images.43s.cn/wp-content/uploads//2022/06/1655198256421_3.png)

    [0. 0.4 0.8000001 1.2 ]
    90.24

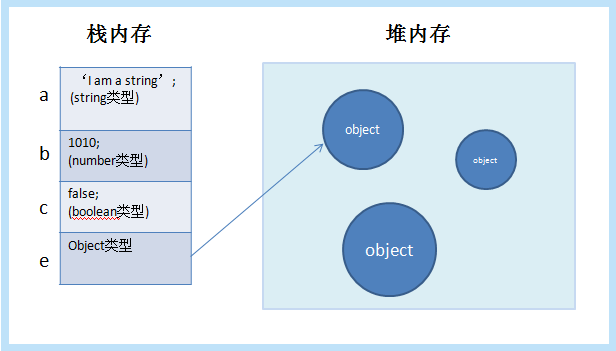

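The error is clearly very large, so we need to adjust the weight (W) and the bias (b) to reduce it.

Model training

TensorFlow provides optimizers that gradually change each variable (weight w, bias b) so as to minimize the cost function.

The simplest optimizer is the gradient descent optimizer. It adjusts each variable according to the rate of change (derivative) of the cost function with respect to that variable, and iterates toward the minimum of the cost function.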
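For the sum-of-squared-errors loss used in this article's code, the derivatives and the update rule can be written out explicitly. This is a sketch under a few notational assumptions: $t_i$ denotes the known target for sample $x_i$ (fed through the placeholder `y` in the code), $L$ denotes the summed loss, and $\eta$ denotes the learning rate. These symbols are introduced here for illustration.

```latex
L(w, b) = \sum_i (w x_i + b - t_i)^2

\frac{\partial L}{\partial w} = 2 \sum_i (w x_i + b - t_i)\, x_i
\qquad
\frac{\partial L}{\partial b} = 2 \sum_i (w x_i + b - t_i)

w \leftarrow w - \eta \frac{\partial L}{\partial w}
\qquad
b \leftarrow b - \eta \frac{\partial L}{\partial b}
```

Each iteration moves $w$ and $b$ a small step against the gradient, so the loss decreases as long as $\eta$ is small enough.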

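    # Create a gradient descent optimizer with a learning rate of 0.01
    optimizer = tf.train.GradientDescentOptimizer(0.01)

    # Use the optimizer to minimize the cost function
    train = optimizer.minimize(loss)

    # Run 1000 iterations; on each one the optimizer updates the
    # model parameters W and b to reduce the error
    for i in range(1000):
        sess.run(train, {x: [1, 2, 3, 4], y: [2, 4, 6, 8]})

    # Print the weight and bias
    print(sess.run([W, b]))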
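To see what the optimizer does on each iteration, here is a minimal plain-Python sketch of gradient descent on the same sum-of-squared-errors loss, using the same initial values, learning rate, and iteration count as the TensorFlow code. It is an illustration of the technique and not TensorFlow's actual implementation. The function name `train_line` and its parameters are hypothetical.

```python
def train_line(xs, ts, w=0.4, b=-0.4, learning_rate=0.01, steps=1000):
    """Gradient descent on L = sum((w*x + b - t)^2) for a one-variable linear model."""
    for _ in range(steps):
        # Prediction error for every sample
        errors = [w * x + b - t for x, t in zip(xs, ts)]
        # Partial derivatives of the loss with respect to w and b
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2 * sum(errors)
        # Step against the gradient
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train_line([1, 2, 3, 4], [2, 4, 6, 8])
print(w, b)  # w ends up close to 2 and b close to 0
```

With this data a learning rate of 0.01 is small enough for the updates to converge. A much larger value would make the iterations diverge.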

Complete code:

    import tensorflow.compat.v1 as tf

    tf.disable_eager_execution()

    # Variable for the slope (W), initial value 0.4
    W = tf.Variable([0.4], dtype=tf.float32)
    # Variable for the intercept (b), initial value -0.4
    b = tf.Variable([-0.4], dtype=tf.float32)
    # Placeholder for the independent variable x
    x = tf.placeholder(tf.float32)

    # Linear regression equation
    linear_model = W * x + b

    # Initialize all variables
    sess = tf.Session()
    init = tf.global_variables_initializer()
    sess.run(init)

    # Run the model and print the y values
    print(sess.run(linear_model, feed_dict={x: [1, 2, 3, 4]}))

    # Placeholder for y, which receives the y values of the samples
    y = tf.placeholder(tf.float32)

    # Sum of squared errors
    error = linear_model - y
    squared_errors = tf.square(error)
    loss = tf.reduce_sum(squared_errors)

    # Print the error
    print(sess.run(loss, feed_dict={x: [1, 2, 3, 4], y: [2, 4, 6, 8]}))

    # Create a gradient descent optimizer with a learning rate of 0.01
    optimizer = tf.train.GradientDescentOptimizer(0.01)

    # Use the optimizer to minimize the cost function
    train = optimizer.minimize(loss)

    # Run 1000 iterations; on each one the optimizer updates the
    # model parameters W and b to reduce the error
    for i in range(1000):
        sess.run(train, {x: [1, 2, 3, 4], y: [2, 4, 6, 8]})

    # Print the weight and bias
    print(sess.run([W, b]))

Output (console startup messages and TensorFlow warnings omitted):

    [0. 0.4 0.8000001 1.2 ]
    90.24
    [array([1.9999996], dtype=float32), array([9.863052e-07], dtype=float32)]

After training, W is approximately 2 and b is approximately 0, which matches the sample data, where y = 2x.
